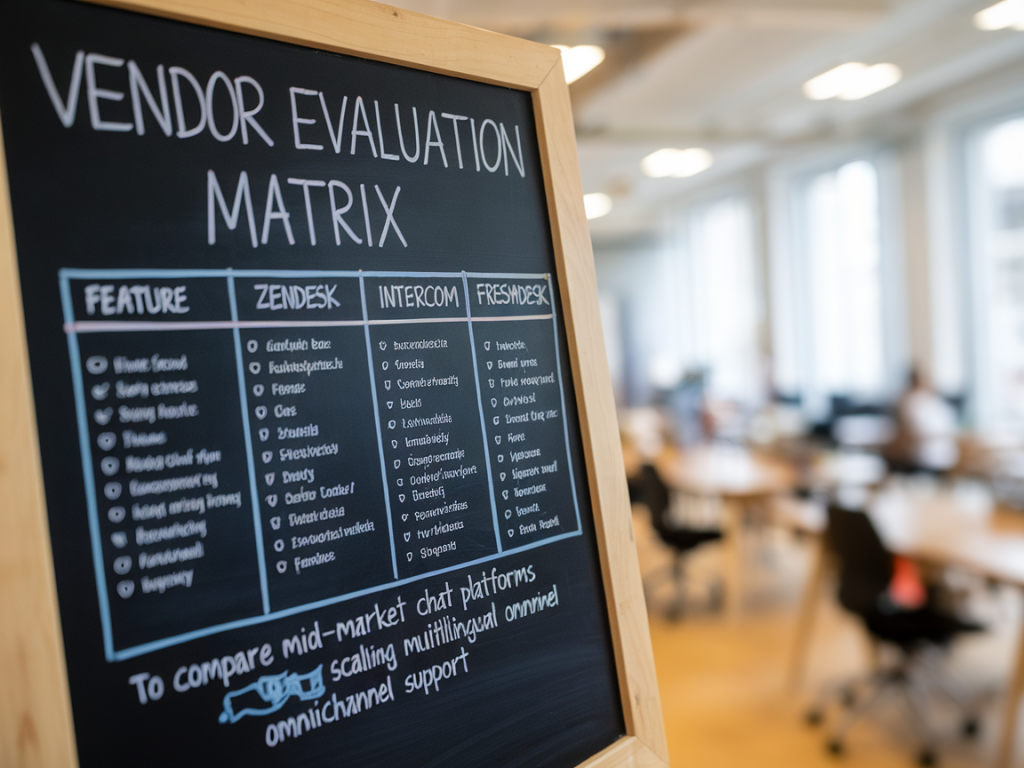

I’ve evaluated dozens of chat and messaging platforms while helping support teams scale omnichannel programs across Europe. When you’re operating in the mid-market — not a tiny startup but not a global enterprise either — the choice between Zendesk, Intercom, and Freshdesk often comes down to a combination of product fit, localisation capabilities, automation flexibility, and predictable total cost of ownership.

Below I share a practical vendor evaluation matrix I use with teams that need to support multiple languages, channels (web chat, WhatsApp, social DMs, email), and a growing volume of conversations without blowing the budget. I’ll walk through the dimensions that matter, how each vendor typically performs, and a sample matrix you can adapt to your own weighting.

Why a focused matrix matters for multilingual omnichannel support

Generic comparisons rarely surface the trade-offs that matter to support leaders: how easy is it to route conversations in Portuguese vs Polish, what happens to SLA tracking when conversations hop channels, how does automation handle language-specific intents, and how much engineering time will be needed to stitch things together?

I build matrices to answer three pragmatic questions:

- Can the platform handle multiple channels with a unified agent surface? Agents shouldn’t have to switch tools or tabs for WhatsApp vs email vs in-app chat.

- Can the platform support multiple languages natively or via integrated translation and NLU? Auto-translation, language detection and language-specific routing make a measurable difference.

- What’s the operational cost and complexity to scale? Licensing, API rate limits, bot authoring, reporting and integrations all affect ongoing cost.

Evaluation dimensions I include

Here are the dimensions I use when building a vendor scorecard for mid-market teams. You’ll notice I weight practical ops and language support higher than shiny features.

- Channel coverage — Web, mobile SDKs, WhatsApp, Facebook/IG, SMS, email, and digital wallets.

- Unified Inbox/Agent UX — How well agents handle cross-channel context and conversation threads.

- Multilingual support — Language detection, translation (auto + human handover), locale-aware routing, and NLU quality for multiple languages.

- Automation & Bots — Bot-building tools, multilingual bot flows, integrations with third-party NLU (e.g., Dialogflow, Rasa), and handover to human agents.

- Routing & Workflow — Skills-based routing, SLA management across channels, time zones, and language queues.

- Reporting & Analytics — Omnichannel metrics, language-level KPIs, conversational retention and deflection rates.

- Integrations & Extensibility — API maturity, webhooks, marketplace apps, and how easy it is to integrate translation providers or CRM data.

- Cost predictability — Licensing model (per-seat, per-conversation), add-on fees for channels like WhatsApp, and translation costs.

- Implementation effort — Time to production, need for professional services, and required engineering support.
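To make the locale-aware routing dimension concrete, here is a minimal sketch of what I mean by a language queue with a fallback. Everything in it is an assumption for illustration: the queue names, the `Conversation` fields, and the 0.8 confidence threshold are hypothetical and do not correspond to any vendor's API. During a demo, the useful question is how much of this logic the platform gives you as configuration versus how much you would have to build yourself.

```python
from dataclasses import dataclass

# Hypothetical language queues; real queue or group names depend on your setup.
QUEUES = {
    "pt": "queue-portuguese",
    "pl": "queue-polish",
    "en": "queue-english",
}
# Hypothetical fallback: agents working with translation assistance.
FALLBACK_QUEUE = "queue-english-translated"


@dataclass
class Conversation:
    channel: str            # e.g. "whatsapp", "email", "web_chat"
    detected_language: str  # language tag from a detector, e.g. "pt-BR"
    confidence: float       # detector confidence between 0 and 1


def route(conv: Conversation, min_confidence: float = 0.8) -> str:
    """Return the queue for a conversation based on its detected language."""
    # Reduce a regional tag such as "pt-BR" to its base language "pt".
    base_language = conv.detected_language.split("-")[0].lower()
    if conv.confidence >= min_confidence and base_language in QUEUES:
        return QUEUES[base_language]
    # Low-confidence detection or an unsupported language goes to the fallback.
    return FALLBACK_QUEUE


print(route(Conversation("whatsapp", "pt-BR", 0.95)))
print(route(Conversation("email", "pl", 0.55)))
```

The fallback branch is the part worth testing in a pilot: short messages often produce low-confidence detection, and you want to know where those conversations land.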

Quick vendor snapshot (typical strengths & caveats)

- Zendesk — Strong omnichannel backbone and mature routing/reporting. Good for teams that want an enterprise-grade ticketing model with comprehensive channel support. Historically stronger in ticket-based workflows than in conversation-first bot tooling, although recent product updates have narrowed that gap. Pricing can escalate with add-ons like WhatsApp and advanced reporting.

- Intercom — Conversational-first UX built for product-led teams. Excellent in-app messaging and proactive engagement flows, with polished bot-building for common use cases. Multilingual capabilities exist but often require third-party translation or custom NLU to scale beyond major languages. Pricing is flexible but can become expensive as you enable advanced features and add seats.

- Freshdesk — Value-oriented with solid channel coverage and a straightforward agent workspace. Freshdesk is competitive on price and provides automation and multilingual features via Freshchat and integrations. It can be a good fit for mid-market teams that prioritise cost-effective scaling over cutting-edge conversational features.

Sample vendor evaluation matrix

Below is a simplified scoring matrix. Score each vendor 1–5 for each dimension (5 = excellent fit), multiply each score by the dimension's weight, and sum the results to get a weighted total per vendor. Set the weights based on your priorities (e.g., give multilingual support double weight if you cover 6+ locales).

| Dimension | Weight | Zendesk (score) | Intercom (score) | Freshdesk (score) | Weighted totals |
|---|---|---|---|---|---|
| Channel coverage | 1.2 | 4.0 | 3.5 | 3.5 | |
| Unified agent UX | 1.0 | 4.5 | 4.0 | 3.5 | |
| Multilingual support | 1.5 | 4.0 | 3.0 | 3.5 | |
| Automation & bots | 1.3 | 3.5 | 4.5 | 3.5 | |
| Routing & SLA | 1.2 | 4.5 | 3.5 | 3.5 | |
| Reporting & analytics | 1.1 | 4.5 | 3.5 | 3.0 | |
| Integrations & APIs | 1.0 | 4.0 | 4.0 | 3.5 | |
| Cost predictability | 0.9 | 3.0 | 3.5 | 4.0 | |
| Implementation effort | 1.0 | 3.5 | 4.0 | 3.5 | |
| Total | — | — | — | — | |

Tip: adapt the weights to match your priorities. If multilingual routing is critical, increase that weight to 2.0 or higher. If cost drives the decision, increase cost predictability weight.
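If you would rather not do the arithmetic by hand, a short script can compute the weighted totals and show how a weight change shifts the ranking. This is a minimal sketch: the weights and scores are copied from the sample matrix, while the function name `weighted_totals` and the `overrides` parameter are my own illustrative choices.

```python
VENDORS = ("Zendesk", "Intercom", "Freshdesk")

# (dimension, weight, scores in VENDORS order), copied from the sample matrix.
MATRIX = [
    ("Channel coverage",      1.2, (4.0, 3.5, 3.5)),
    ("Unified agent UX",      1.0, (4.5, 4.0, 3.5)),
    ("Multilingual support",  1.5, (4.0, 3.0, 3.5)),
    ("Automation & bots",     1.3, (3.5, 4.5, 3.5)),
    ("Routing & SLA",         1.2, (4.5, 3.5, 3.5)),
    ("Reporting & analytics", 1.1, (4.5, 3.5, 3.0)),
    ("Integrations & APIs",   1.0, (4.0, 4.0, 3.5)),
    ("Cost predictability",   0.9, (3.0, 3.5, 4.0)),
    ("Implementation effort", 1.0, (3.5, 4.0, 3.5)),
]


def weighted_totals(matrix, overrides=None):
    """Sum weight * score per vendor; `overrides` replaces selected weights."""
    overrides = overrides or {}
    totals = [0.0] * len(VENDORS)
    for dimension, weight, scores in matrix:
        effective_weight = overrides.get(dimension, weight)
        for i, score in enumerate(scores):
            totals[i] += effective_weight * score
    return {vendor: round(total, 2) for vendor, total in zip(VENDORS, totals)}


baseline = weighted_totals(MATRIX)
multilingual_heavy = weighted_totals(MATRIX, {"Multilingual support": 2.0})

print("Baseline:", baseline)
print("Multilingual weight 2.0:", multilingual_heavy)
```

With the sample scores, the baseline totals come out at 40.4 for Zendesk, 37.75 for Intercom, and 35.6 for Freshdesk, and raising the multilingual weight to 2.0 widens Zendesk's lead rather than changing the order. Your own scores will differ, so treat the output as a starting point for discussion rather than a verdict.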

How I interpret typical results for mid-market teams

When I run this matrix with clients, three common outcomes emerge:

- Zendesk wins when you need robust ticketing, enterprise-ready routing and reporting, and you already prioritise structured SLAs and compliance. It’s strong for multilingual teams if you add a translation layer (native auto-translate helps but careful handover flows are needed).

- Intercom wins when your product is central to support and you want a conversational-first experience with great in-app targeting and proactive messaging. Intercom’s bot tooling is excellent for in-flow self-service, but expect to invest in third-party NLU or translations for less common languages.

- Freshdesk wins when budget is tighter and you need solid omnichannel capabilities with reasonable automation. It’s often the most cost-effective option for piloting multilingual coverage and scaling in predictable steps.

Operational considerations that change the score

A few operational realities often shift the preferred vendor:

- Volume mix — High WhatsApp volume with strict template use favours vendors with mature WhatsApp integrations and predictable per-message pricing.

- Language breadth — Supporting 10+ languages nudges teams toward platforms with built-in detection and translation or an easy way to plug in a translation API. Consider latency and privacy implications of routing messages through third-party translators.

- Automation complexity — If your bots need to recognise locale-specific intents (e.g., payment terminology that varies by country), evaluate the NLU’s language support rather than only the bot-builder UX.

- Reporting needs — If you track language-level CSAT, multilingual NPS and channel-level deflection, ensure the vendor can attribute and report across those dimensions out of the box or via robust data exports.
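For the reporting point, one way to check whether a vendor's data export is good enough is to see if you can rebuild language-level and channel-level KPIs from it yourself. The sketch below assumes an export with one record per conversation and hypothetical field names (`language`, `channel`, `csat`, `resolved_by_bot`); real exports will use different names and shapes, and the sample rows are made up purely to show the calculation.

```python
from collections import defaultdict


def kpis_by_segment(rows):
    """Aggregate conversation count, deflection rate and average CSAT
    per (language, channel) pair."""
    buckets = defaultdict(
        lambda: {"conversations": 0, "deflected": 0, "csat_sum": 0, "csat_n": 0}
    )
    for row in rows:
        bucket = buckets[(row["language"], row["channel"])]
        bucket["conversations"] += 1
        if row["resolved_by_bot"]:
            bucket["deflected"] += 1
        # CSAT is optional: many conversations never receive a rating.
        if row.get("csat") is not None:
            bucket["csat_sum"] += row["csat"]
            bucket["csat_n"] += 1

    report = {}
    for segment, b in buckets.items():
        report[segment] = {
            "conversations": b["conversations"],
            "deflection_rate": b["deflected"] / b["conversations"],
            "avg_csat": b["csat_sum"] / b["csat_n"] if b["csat_n"] else None,
        }
    return report


# Made-up rows for illustration only.
sample_rows = [
    {"language": "pt", "channel": "whatsapp", "csat": 5, "resolved_by_bot": True},
    {"language": "pt", "channel": "whatsapp", "csat": 3, "resolved_by_bot": False},
    {"language": "pl", "channel": "email", "csat": None, "resolved_by_bot": False},
]

for segment, kpis in kpis_by_segment(sample_rows).items():
    print(segment, kpis)
```

If the export lacks a reliable language field or a flag for bot-resolved conversations, that gap is worth raising during the pilot, because it usually means language-level reporting will need custom tagging.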

Next steps I recommend when you’re shortlisting vendors

- Run the matrix with real weights based on your priorities and expected volume by channel/language.

- Request a 14–30 day pilot focused on your most common language flows and channels — push real conversations through bot + human handover scenarios.

- Test translation flows with privacy and latency in mind (machine translate then human edit vs agent-side translation tools).

- Validate API quotas and webhook reliability during the pilot. Integration failures tend to derail a rollout if you don’t stress-test them early.

- Ask for a clear price schedule for adding WhatsApp, voice, or additional languages — small add-ons can compound quickly.

If you want, I can adapt the matrix above to your specific channel mix, volumes and language list — I often turn these into a short scoring workbook teams can use during vendor demos. Tell me your top 3 languages and expected monthly conversation volume and I’ll produce a tailored scorecard you can share with procurement and engineering.