When a chatbot hands a conversation off to a human and the customer leaves frustrated, nobody wins. Yet failed handoffs are common: context goes missing, waits run long, customers repeat themselves, and agents lack the right information. Over the past decade I've helped teams turn those moments from pain points into measurable CX wins. Here's a sprint-ready playbook you can run in two weeks to convert failed chatbot handoffs into CSAT improvements you can quantify and repeat.

Why focus on failed handoffs?

Failed handoffs are low-hanging fruit. They sit at the junction of automation and human support, where small changes yield big improvements in customer satisfaction and operational metrics. Fixing handoffs reduces repeat contacts, shortens resolution time, and raises CSAT, often with minimal engineering work. In most support stacks I've audited, handoff failures are responsible for a disproportionate share of poor survey scores.

What “sprint-ready” means here

By sprint-ready I mean a focused, cross-functional two-week effort that produces deployable changes, a measurement plan, and observable CSAT improvement. You don’t need months of roadmap refinement or a full replatforming. You need a clear hypothesis, lightweight tooling adjustments, agent playbooks, and a short A/B test or phased rollout.

Before you start: gather these ingredients

Week 0 — Rapid discovery (1–2 days)

Do a focused audit to find the worst offenders.

This step should produce a prioritized list. Pick the one or two failure modes that together account for roughly 60% of poor outcomes.
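One way to turn the audit into that prioritized list is a simple Pareto count over tagged failures. The sketch below assumes each poor-outcome handoff has been manually tagged with a single failure mode; the tag names and sample counts are hypothetical, not data from a real audit.

```python
from collections import Counter


def top_failure_modes(tags, coverage=0.6):
    """Return the most frequent failure modes, in order, until their
    combined share of all tagged handoffs reaches `coverage`."""
    counts = Counter(tags)
    total = sum(counts.values())
    selected, cumulative = [], 0
    for mode, count in counts.most_common():
        # Stop once the modes already selected cover enough of the failures.
        if cumulative / total >= coverage:
            break
        selected.append((mode, count / total))
        cumulative += count
    return selected


# Hypothetical tags from a manual review of 20 low-CSAT handoffs.
sample_tags = (
    ["missing_context"] * 8
    + ["long_wait"] * 5
    + ["wrong_queue"] * 4
    + ["repeated_auth"] * 3
)

for mode, share in top_failure_modes(sample_tags):
    print(f"{mode}: {share:.0%}")
```

If the top two modes fall well short of the coverage threshold, that usually means the tags are too fine-grained to prioritize from and should be merged before the sprint starts.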

Sprint plan: two weeks (high level)

The sprint has four parallel tracks: quick technical fixes, agent playbook and training, customer messaging tweaks, and measurement. All four must move together to capture CSAT impact.

Day 1–3: Implement quick technical fixes

Focus on changes you can ship without major architecture work.

Example: for a company using Intercom + Zendesk, a 2-hour automation can post the last 5 bot messages and the predicted intent into the Zendesk ticket body via webhook. That alone prevents an agent from asking "What did the bot say?"
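A minimal sketch of the formatting step in such an automation is below. The transcript shape (a list of dicts with `sender` and `text`) and the function name are assumptions for illustration. The returned dictionary mirrors the general shape of a Zendesk ticket-creation request body, but check it against the current Zendesk API documentation before wiring it to a real webhook.

```python
import json


def build_handoff_ticket(transcript, predicted_intent, max_bot_messages=5):
    """Build a ticket payload carrying the bot's predicted intent and its
    most recent messages, so the agent does not have to ask for them."""
    # Hypothetical transcript format: [{"sender": "bot" | "user", "text": str}, ...]
    bot_messages = [m["text"] for m in transcript if m["sender"] == "bot"]
    recent = bot_messages[-max_bot_messages:]

    lines = [
        f"Predicted intent: {predicted_intent}",
        "",
        f"Last {len(recent)} bot messages:",
    ]
    lines += [f"{i}. {text}" for i, text in enumerate(recent, start=1)]

    # Assumed payload shape; verify field names against your help desk's API.
    return {
        "ticket": {
            "subject": f"Chatbot handoff: {predicted_intent}",
            "comment": {"body": "\n".join(lines)},
        }
    }


example_transcript = [
    {"sender": "user", "text": "I was charged twice"},
    {"sender": "bot", "text": "I can help with billing. Which order is this about?"},
    {"sender": "user", "text": "The one from last week"},
    {"sender": "bot", "text": "Thanks. I am connecting you with an agent."},
]
print(json.dumps(build_handoff_ticket(example_transcript, "duplicate_charge"), indent=2))
```

Keeping the formatting in a pure function like this makes it easy to test before any network call is involved.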

Day 4–6: Create an agent handoff playbook and short training

Write a one-page script and run a 30–45 minute roleplay session with agents. Keep it practical.

Run two mock handoffs per agent and capture improvement areas. Make the playbook visible in the agent UI (a Confluence page, Slack shortcut, or an internal KB snippet).

Day 7–10: Improve customer-facing messaging and routing

Small wording changes in the bot and proactive time-to-agent guidance reduce frustration.

These steps cut perceived wait time and lower the chance of customers abandoning the chat.
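As an illustration of time-to-agent guidance, the sketch below composes a handoff message from an estimated wait. The wording, the function name, and the assumption that your queueing tool can supply an estimate in minutes are all hypothetical; adapt them to your own bot's voice and data.

```python
import math


def handoff_message(estimated_wait_minutes, context_shared=True):
    """Compose a bot message that sets expectations before an agent joins."""
    if estimated_wait_minutes <= 1:
        wait = "An agent will join in under a minute."
    else:
        # Round up so the estimate errs on the conservative side.
        wait = f"An agent will join in about {math.ceil(estimated_wait_minutes)} minutes."

    if context_shared:
        reassurance = "I have shared our conversation with them, so you will not need to repeat yourself."
    else:
        reassurance = "They may ask a few questions to get up to speed."

    return f"{wait} {reassurance}"


print(handoff_message(2.2))
```

Only promise that context has been shared when the technical fix from earlier in the sprint is actually live for that conversation.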

Day 11–14: Measurement and A/B test

Deploy changes to a percentage of traffic (25–50%) or a subset of intents. Track outcomes daily and be ready to roll back if needed.
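If your bot platform has no built-in traffic splitting, a deterministic hash of the conversation ID is one simple way to assign a fixed share of handoffs to the new experience. The function name, the salt value, and the assumption that every conversation has a stable string ID are illustrative.

```python
import hashlib


def in_treatment(conversation_id, rollout_percent=25, salt="handoff-sprint-1"):
    """Deterministically place a conversation in the treatment group.

    The same conversation ID always gets the same answer, so a customer
    does not flip between experiences mid-conversation.
    """
    digest = hashlib.sha256(f"{salt}:{conversation_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # bucket in the range 0..99
    return bucket < rollout_percent


# Rough check of the split on synthetic IDs.
ids = [f"conv-{i}" for i in range(10_000)]
share = sum(in_treatment(cid) for cid in ids) / len(ids)
print(f"Treatment share: {share:.1%}")
```

Setting `rollout_percent` to 0 acts as the rollback switch, and changing the salt reshuffles the assignment for a later experiment.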

| Metric | Baseline | Target (within 2 weeks) |
|---|---|---|
| Handoff CSAT (avg) | 3.4 / 5 | +0.5 |
| Repeat question rate | 28% | -40% |
| Abandonment after handoff | 12% | -50% |
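To make the targets concrete, the sketch below reads the CSAT target as an absolute gain in points and the two percentage targets as relative reductions. That reading is an assumption, though an absolute 40-point drop from a 28% baseline would not be possible. The per-handoff record fields (`csat`, `agent_reasked`, `abandoned`) are hypothetical names for data you would export from your own tooling.

```python
BASELINE = {"csat_avg": 3.4, "repeat_question_rate": 0.28, "abandonment_rate": 0.12}

# Assumed interpretation: +0.5 points absolute, -40% and -50% relative.
TARGETS = {
    "csat_avg": BASELINE["csat_avg"] + 0.5,                                  # about 3.9
    "repeat_question_rate": BASELINE["repeat_question_rate"] * (1 - 0.40),  # about 16.8%
    "abandonment_rate": BASELINE["abandonment_rate"] * (1 - 0.50),          # about 6%
}


def summarize(handoffs):
    """Compute the three handoff metrics from per-handoff records."""
    n = len(handoffs)
    # Unanswered surveys (csat is None) are excluded from the average.
    rated = [h["csat"] for h in handoffs if h["csat"] is not None]
    return {
        "csat_avg": sum(rated) / len(rated) if rated else None,
        "repeat_question_rate": sum(h["agent_reasked"] for h in handoffs) / n if n else None,
        "abandonment_rate": sum(h["abandoned"] for h in handoffs) / n if n else None,
    }


def meets_targets(summary, targets=TARGETS):
    """CSAT should be at or above target; the two rates at or below."""
    return {
        "csat_avg": summary["csat_avg"] is not None and summary["csat_avg"] >= targets["csat_avg"],
        "repeat_question_rate": summary["repeat_question_rate"] is not None
        and summary["repeat_question_rate"] <= targets["repeat_question_rate"],
        "abandonment_rate": summary["abandonment_rate"] is not None
        and summary["abandonment_rate"] <= targets["abandonment_rate"],
    }
```

Run `summarize` separately on the treatment and control groups each day, so a shift in the overall ticket mix is not mistaken for an effect of the changes.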

What to expect in results

From multiple sprints I’ve led, the fastest wins come from sharing context and setting expectations. Teams commonly see a 0.3–0.7 CSAT point lift within two weeks, and a 30–60% reduction in agent re-asks. If you pair that with routing improvements, you can also cut handle time and lower repeat contacts.

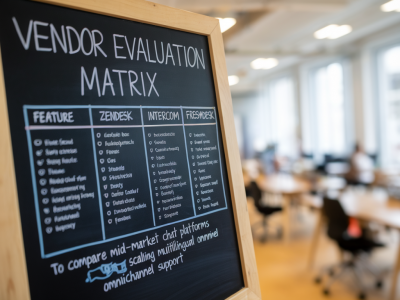

Real-world examples & vendor notes

I once worked with a fintech where the bot repeatedly handed off high-risk verification queries. We added the last three bot messages and the KYC step the customer had already completed to the agent ticket. Agents stopped re-asking routine verification questions and could resolve cases in one touch. CSAT rose by 0.6 points and average handling time dropped 22%.

Tools that make this easier: Zapier or Workato for rapid transcript forwarding, Segment for passing customer context into agent tools, and bot platforms like Rasa or Dialogflow that expose webhook payloads. If you're on Zendesk, use Sunshine Conversations or app extensions to include bot transcripts directly in the ticket view.

Common pitfalls and how to avoid them

Next steps after the sprint

If the initial test shows improvement, expand the changes across more intents, bake the playbook into onboarding, and consider automating the key elements: automatic context attachments, intent-based routing, and post-resolution bot updates that confirm the outcome. Over time, use root-cause tagging on failed handoffs to prioritize upstream bot improvements.

Fixing failed handoffs is one of the highest-impact, lowest-friction improvements you can make in a modern support stack. With a focused two-week sprint, the right context passing, clear agent guidance, and tight measurement, you can convert those pain points into consistent, measurable CSAT wins your team can celebrate and scale.